A Treatise on Data Businesses

Data businesses are generally misunderstood. (That is an understatement.)

I’ve spent the last 13 years running data companies (previously LiveRamp (NYSE:RAMP) and now SafeGraph), investing in dozens of data companies, meeting with CEOs of hundreds of data companies, and reading histories of data businesses. I’m sharing my knowledge about data businesses here, written primarily for people who either invest in or operate data businesses. I put this together because there is so much information on SaaS companies and so little information on DaaS companies. Please reach out to me with new information, new ideas, challenges to this piece, corrections, etc. And please let me know if this is helpful to you. (This was written in mid-2019.)

DaaS is not really SaaS … and it is not Compute either

Data businesses have some similarities to SaaS businesses but also some significant differences. While there has been a lot written about SaaS businesses (how they operate, how they get leverage, what metrics to watch, etc.), there has been surprisingly little written about data businesses. This piece serves as an overview of what a 21st-century data business should look like, a guide to what to look for (as an investor or potential employee), and an operational manual for executives.

In the end, great data companies look like the ugly child of a SaaS company (like Salesforce) and a compute service (like AWS). Data companies have their own unique lineage, lingo, operational cadence, and more. They are an odd duck in the tech pond. That makes it harder to evaluate if they are a good business or not.

Everything today is a service — data companies are no exception

Almost all new companies are set up as a service. Software-as-a-Service (like Salesforce, Slack, Google apps, etc.) has been on the rise for the last twenty years. Compute-as-a-service (like AWS, Google Cloud, Microsoft Azure, etc.) has become the dominant means to get access to servers in the last decade. There are now amazing API-as-a-service businesses (like Twilio, Checkr, Stripe, etc.). And data companies are also becoming services (with the gawky acronym “DaaS” for “Data-as-a-Service”).

Data is ultimately a winner-takes-most market

Long term (with the caveat that the markets work well and the competitors are rational), a niche for a Truth Data business should only have one dominant player. If two companies sell the exact same data, the data becomes a commodity and both companies should logically go out of business. So data companies either need to focus on a different niche or focus on a different truth. If companies are selling the exact same data, there will eventually be a price war. (Note: this is why lowering friction to evaluate the data is so important for the winner to capture the market; things like self-serve and integrations become very important.)

Of course, some data markets have no dominant player and are hyper-competitive. These are generally either very early markets where no one has yet emerged or they are legacy markets where the competitors are not yet behaving rationally.

Data is a growing business

One of the biggest themes in the last ten years has been products that help companies use first-party data. These include storage (like Databricks, Cloudera), middleware (LiveRamp, Plaid), BI (Tableau, Looker), data processing (Snowflake), log processing (Splunk), and many, many, many more. (Note: as a reminder about the power of these tools … while I was writing this post, both Tableau and Looker were acquired for a total price of almost $20 billion!)

These products help companies manage their own data better.

The amount of collected first-party data is growing exponentially due to better tools, internet usage, and more and more of our world moving from the physical to the digital.

More and more people are comfortable working with data. “Data Science” is one of the fastest growing professions in the world. KDNuggets reports “in June 2017, the Kaggle community crossed 1 million members, and Kaggle email on Sep 19, 2018 says they have crossed 2 million members in 194 countries.” IBM estimates that the number of people in data science is growing faster than 20% per year.

First-party data is not enough

But unless your company is Google, Facebook, Apple, Amazon, Tencent, or 12 other companies … even after you leverage all your first-party data, your models will need external data.

Even five years ago, very few companies were equipped to leverage external data. Most companies still aren’t. But over the next ten years, more and more companies will have the infrastructure and the talent to use external data to build more truth and better predictions. At least, that is the bet.

There are an order of magnitude more data buyers today than there were five years ago.

IAB reports that even buying marketing audience data (which is traditionally the least accurate of all data) is a massive business and growing.

Nonetheless, there are still very few data buyers today. Most companies want applications (answers) rather than data (ingredients). The only reason to start (or invest in) a data business today is if you believe the number of data buyers will grow by at least 10x over the next decade.

Data companies are backward looking

Data companies are ultimately about selling verifiable facts. So data companies collect and manufacture information about what happened.

Data companies are about truth. They are about what happened in the past. So much so that SafeGraph’s motto is “we predict the past.”

Of course, being accurate (even about verifiable facts) is really hard (more on that later in this post). There is no possible way to get to 100% true.

While data companies are about truth, prediction companies (like predicting fraud, predicting credit, predicting who will buy a product) are about religion. I’ve previously written about the difference between truth versus religion and data versus application.

Truth companies focus on facts that happened and Religion companies use those facts to help predict the future. One way to think about the market is that Religion companies often buy from Truth companies … and Application companies often buy from both Truth and Religion companies. SafeGraph (a Truth Data company) has a lot of customers that are applications or religions.

It is really important that data is true

One of the weird things about data companies historically is they often failed on one core value: veracity. They spent their efforts on coverage (recall) and did not focus enough on accuracy (precision).

There is a huge trade-off between precision (accuracy) and recall (coverage). In the past, most data buyers cared about coverage. But as models become more and more important, the input data needs to be accurate. We are moving from a world of “approximate truth” to “truth.”
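To make the trade-off concrete, here is a minimal sketch of how a buyer might score a dataset against a small hand-verified sample. The store IDs, the two vendors, and the `precision_recall` helper are all hypothetical and exist only for illustration.

```python
def precision_recall(published: set, ground_truth: set) -> tuple:
    """Score a published dataset against a hand-verified sample of true records."""
    true_positives = len(published & ground_truth)
    # Precision (accuracy): what share of the published records are real?
    precision = true_positives / len(published) if published else 0.0
    # Recall (coverage): what share of the real records were published?
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    return precision, recall


# Hypothetical verified sample and two hypothetical vendors.
verified = {"store_1", "store_2", "store_3", "store_4"}
broad_vendor = verified | {"store_96", "store_97", "store_98", "store_99"}
careful_vendor = {"store_1", "store_2", "store_3"}

broad = precision_recall(broad_vendor, verified)      # (0.5, 1.0)
careful = precision_recall(careful_vendor, verified)  # (1.0, 0.75)
```

The broad vendor covers everything but half of its records are wrong; the careful vendor misses one store but everything it publishes is true. A model trained on the first dataset inherits its errors.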

Not too long ago, much of the best data was actually compiled by hand. Some of the biggest data companies in the world have thousands of people in low-cost countries manually verifying and entering data.

As companies rely more and more on data (and build their machine learning models on data), truth is becoming a more important requirement than coverage.

One thing to look for in a data company is its rate of improvement. Some data companies actually publish their change logs on how the data is improving over time. The faster the data improves (and the more the company is committed to truth), the more likely the company is to win the market long term.

The three pillars of data businesses: Acquisition, Transformation, and Delivery

SafeGraph’s President and co-founder Brent Perez always likes to remind me that there are only three core things a data company does:

- Data Acquisition

- Data Transformation

- Data Delivery

First pillar: Data Acquisition is about bringing in raw materials

There are lots of ways for companies to acquire data and every data company needs to be very good at at least one of these:

- Data co-op: getting your customers to send you the data (usually for free) in return for analytics on the data. For instance, a salary co-op might get payroll data from companies in return for letting those companies see what their competitors are paying.

- BD deals: creating strong long-term business development deals to get data. These often take a long time to close and can be expensive (see the section on margins below). Datalogix (which was sold to Oracle in 2015) did a great job of acquiring auto data through a long-term partnership with Polk.

- Public data: Search engines (like Google) are one example of companies that compile great data. They crawl the public web and make sense of it.

Second pillar: Data Transformation

Your data acquisition might come from thousands of sources. You need to fuse the data together and make it useful. Even if you get your data from a few BD deals, you eventually want to graph the datasets together to find the truth. One of the ways to build a high-margin data business is by getting very good at transformation.

Some transformations might be simple (like local time to UTC) and some might be extremely complicated (joining two datasets together where there is no common key). Questions you want to ask include: How do you join all of the data sets together? What is your “key”? How do you resolve two entities that might be the same? (Identity resolution). How do you deal with contradictory data? How do you deal with outliers? How do you normalize the data? (schemas/ontologies).
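The two ends of that spectrum can be sketched in a few lines. This is only an illustration under stated assumptions: the UTC offset of the source is assumed to be known, the name-matching rule is a naive string similarity rather than a real identity-resolution system, and the 0.85 threshold is an arbitrary choice.

```python
from datetime import datetime, timedelta, timezone
from difflib import SequenceMatcher


def to_utc(local_timestamp: str, utc_offset_hours: int) -> datetime:
    """Simple transformation: attach a known UTC offset, then convert to UTC."""
    naive = datetime.fromisoformat(local_timestamp)
    local = naive.replace(tzinfo=timezone(timedelta(hours=utc_offset_hours)))
    return local.astimezone(timezone.utc)


def normalize(name: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    kept = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(kept.split())


def same_entity(a: str, b: str, threshold: float = 0.85) -> bool:
    """Harder transformation: guess whether two names are the same entity.

    There is no common key, so this falls back on string similarity.
    The threshold is an illustrative assumption, not a recommendation.
    """
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio() >= threshold


utc_time = to_utc("2019-06-15T09:30:00", -7)  # 09:30 at UTC-7 is 16:30 UTC
match = same_entity("Joe's Coffee, Inc.", "Joes Coffee Inc")  # True
```

With billions of records, rules like `same_entity` have to be applied automatically, which is why the thresholds and normalization steps matter so much.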

If the data company is employing machine learning (and most good data companies are), this is the section where the machine learning happens.

Data transformation is very difficult. As Arup Banerjee, CEO of Windfall Data, reminded me: “You can’t just fix a bug with a simple fix — you can certainly manually fix one record, but if you have billions of records, you have to build systems that automatically resolve these errors.”

Third pillar: Data Delivery is about how the customer gets access to the data

Is it an enterprise solution where they get a big batch file (via an S3 bucket or SFTP)? Is it an API? Is it a self-serve UI? What integrations with existing platforms (e.g. SFDC, Shopify, etc.) do you have?

Does the data come streaming in real-time? Or is the data compiled monthly? Is it reliable and timely? Is the data well documented and well defined? Or does it contain inscrutable columns and poor data dictionaries? Does the data document its assumptions and transformations? Are there “hidden” filters and assumptions? Is the data organized into schemas and ontologies that make sense and are useful? Is it easy to join with other data sets?

Great data companies unify on a central theme

Data companies need to get leverage and so the data should ultimately fit together with a common key. This is not only true for data companies; great middleware companies should also have a central theme. Of course, the best themes are ones that everyone understands, are big enough to collect lots of interesting data, and have good “join keys” with other data themes.

The biggest themes of data business are core concepts that make up our world:

- People

- Products

- Places

- Companies

- Procedures

Tying static data to time

Data on these static dimensions (people, products, companies, places, etc.) becomes more valuable when crossed with time.

For instance, charging for real-time traffic data can sometimes be more valuable than charging for a map. Maps are fairly static, but traffic changes every second.

Another example of time crossed with the physical world is weather data — it changes all the time and is vital for many consumers and industries. In a place like San Francisco, temperature data changes based on time AND based on micro-location (place).

One of the classic temporal datasets is price per stock ticker per time. That dataset is vital to any trader. In fact, much of the most valuable data is tied to pricing over time. Examples include commodity pricing, the Economist’s Big Mac Index, etc.

Linking datasets together makes the data much more valuable

Data by itself is not very useful. Yes, it is good to know that the American Declaration of Independence was signed on July 4, 1776. But that fact alone doesn’t tell us much.

One of the big ways that data becomes useful is when it is tied to other data. The more data can be linked (or joined), the more powerful it becomes.

One great join key is time. Nowadays, time is mostly pretty standard (that was not true a few centuries ago). And we even have a global standard for time: UTC. Another join key is location (like a postal code).

The more join keys (and joined data sets) you can find, the more valuable those data become. Let’s consider a simple example. Let’s get data on a ticker and also find out where all the company’s stores are. Now let’s find out how many people go into each store. Now let’s find out the weather at each store. Now let’s find out how many of those people bought something.
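Here is a minimal sketch of that chain of joins, using plain dictionaries as stand-ins for the datasets. Every ticker, store ID, and number is made up; the point is only that each dataset carries a key (ticker or store ID) that lets it attach to the next one.

```python
# Hypothetical toy datasets, each keyed so it can be joined to the others.
stores_by_ticker = {"ACME": ["store_1", "store_2"]}
visits_by_store = {"store_1": 120, "store_2": 80}
weather_by_store = {"store_1": "rain", "store_2": "sun"}
purchases_by_store = {"store_1": 30, "store_2": 40}


def join_store_data(ticker: str) -> list:
    """Join ticker -> stores -> visits, weather, and purchases on store ID."""
    rows = []
    for store_id in stores_by_ticker.get(ticker, []):
        visits = visits_by_store[store_id]
        purchases = purchases_by_store[store_id]
        rows.append({
            "ticker": ticker,
            "store_id": store_id,
            "weather": weather_by_store[store_id],
            "visits": visits,
            # Only computable once visits and purchases share a key.
            "conversion": purchases / visits,
        })
    return rows


rows = join_store_data("ACME")
```

A question like "does rain lower the share of visitors who buy something?" cannot be asked of any one of these datasets alone. It only becomes possible once they are joined.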

As you keep joining data, the number of questions you can ask (and answer) grows exponentially.

Which means that if the value of Dataset A is X and the value of Dataset B is Y, the value of joining the two datasets together is usually much greater than X+Y.

Building keys into your data so that they are easier to join: make it SIMPLE

Your data will be much more valuable if you enable it to be joined with other datasets (even if you are not the one doing the joining). Most people think that they need to hoard the data. But the data increases in value if it can be combined with other data.

One way to make data easy to combine is to purposely think about linking it — essentially creating an ID or a foreign key that can be used by other datasets.

A good ID or foreign key for a data company should be SIMPLE:

- Storable. You should be able to store the ID offline. For instance, I know my SSN and my payroll system stores it.

- Immutable. It should not change over time. An SSN on a person is usually the same from birth until death (except in very rare cases).

- Meticulous (high precision). The same entity in two different systems should resolve to the same ID. It should be very difficult for two entities to have the same ID.

- Portable. I can easily move my SSN from one payroll system to another.

- Low-cost. The ID needs to be cheap (or even free). If it is too expensive, the transaction costs will make it hard to use.

- Established (high recall). It needs to cover almost all of its subjects. An SSN covers basically every American taxpayer (and almost all American citizens).

Creating a SIMPLE key to combine your data with other datasets is the most important thing you can do to build a winner-takes-most market in a data niche.
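Two of the SIMPLE properties, Meticulous and Established, can be measured. Here is a rough sketch that audits a candidate ID against a sample where the true entity is already known. The field names (`place_id`, `true_entity`) and the sample records are hypothetical, and a real audit would need a much larger verified sample.

```python
from collections import defaultdict


def key_quality(records: list, id_field: str, entity_field: str) -> dict:
    """Rough checks for the Established and Meticulous properties of an ID.

    coverage:   share of records that carry the ID at all (recall).
    collisions: IDs that point at more than one distinct entity.
    splits:     entities that appear under more than one ID.
    """
    entities_per_id = defaultdict(set)
    ids_per_entity = defaultdict(set)
    with_id = 0
    for record in records:
        key = record.get(id_field)
        if key is None:
            continue
        with_id += 1
        entities_per_id[key].add(record[entity_field])
        ids_per_entity[record[entity_field]].add(key)
    return {
        "coverage": with_id / len(records) if records else 0.0,
        "collisions": sorted(k for k, v in entities_per_id.items() if len(v) > 1),
        "splits": sorted(e for e, v in ids_per_entity.items() if len(v) > 1),
    }


# Hypothetical audited sample.
sample = [
    {"place_id": "p1", "true_entity": "Cafe A"},
    {"place_id": "p1", "true_entity": "Cafe A"},
    {"place_id": "p2", "true_entity": "Cafe B"},
    {"place_id": "p2", "true_entity": "Cafe C"},  # collision: one ID, two entities
    {"place_id": None, "true_entity": "Cafe D"},  # not covered by the ID
]
report = key_quality(sample, "place_id", "true_entity")
```

Here the ID covers 80% of the sample and has one collision, so it would fall short on both counts.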

I’d like to see a world where organizations are actively encouraged to share data as more data sharing leads to more open information.

Economics of Data Companies are NOT What They Appear to Be

Margins for most data businesses initially look very bad

Data companies generally have a lot of trouble attracting Series A and Series B investors because their margins often look terrible. In the early days, they might have to spend significant amounts on COGS (Cost of Goods Sold) for data acquisition (BD deals, etc.). But these “COGS” do not scale with revenue. In fact, they are just step function costs as companies grow. As Michael Meltz, EVP at Experian, reminded me, “incremental margins eventually become extremely attractive at successful data businesses.”

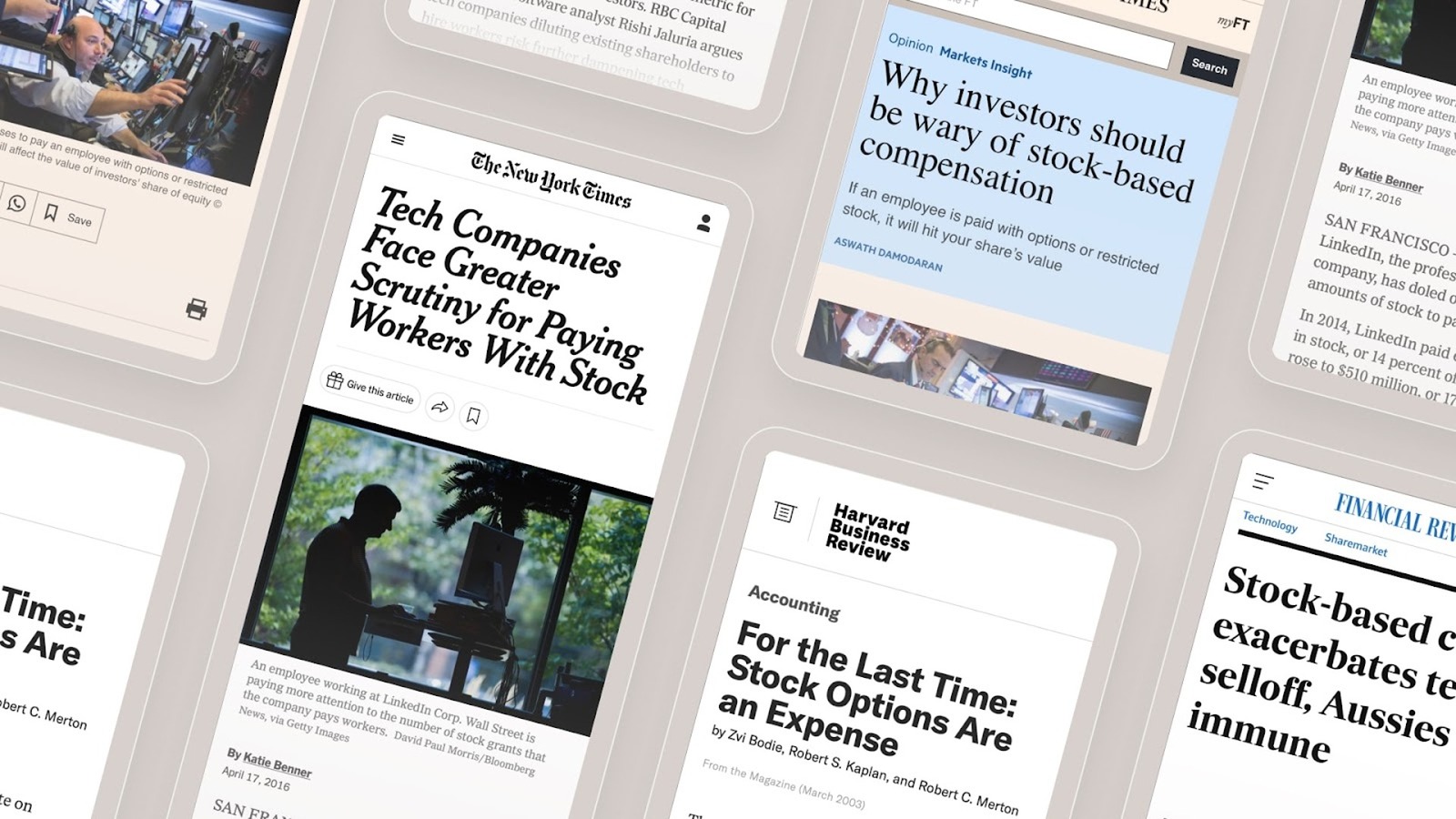

The reality is that data costs are often a long-term asset and they only sit in COGS because of an accounting rule. SaaS companies, by contrast, spend gigantic sums on sales, marketing, and customer success. Most of these costs are also not truly variable, but they sit in OpEx.
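A small worked example shows why step-function costs make early margins misleading. The revenue thresholds and dollar amounts below are invented for illustration and do not come from any real company.

```python
def step_data_cost(revenue: float) -> float:
    """Hypothetical step function: data costs jump at thresholds, not per dollar sold."""
    if revenue < 5_000_000:
        return 1_000_000
    if revenue < 50_000_000:
        return 3_000_000
    return 6_000_000


def gross_margin(revenue: float) -> float:
    """Gross margin when data acquisition is the only cost in COGS."""
    return (revenue - step_data_cost(revenue)) / revenue


margins = {
    revenue: round(gross_margin(revenue), 2)
    for revenue in (2_000_000, 10_000_000, 100_000_000)
}
# {2000000: 0.5, 10000000: 0.7, 100000000: 0.94}
```

Under these assumptions, growing from $10M to $100M adds $90M of revenue but only $3M of data cost, so the incremental margin on that growth is about 97%, even though the company looked like a 50%-margin business at $2M.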

In DaaS companies, CACs (Customer Acquisition Costs) tend to decline over time (for the same customer) as the data becomes more integrated into the customer’s workflow.

One way to see this is ARR (annual recurring revenue) per employee. Another thing to look at is net revenue retention. Great DaaS companies look like great SaaS companies on these metrics. A good analogy is Netflix, which aggregates consumers worldwide to justify spending money on content. Data companies aggregate data buyers worldwide to justify spending money on data acquisition.

Example: “Priviconix”

Of course, there are a lot of ways to do data acquisition and they have different cost structures which affect your margins. Let’s say you want to build a company (“Priviconix”) that sells data on whether a company has a privacy policy on its home page. (by the way, this is a fictional example, but someone should start a company like this — I am happy to invest).

There might be a vendor that has already crawled the top 100,000 company web sites and can send you the data for $50,000 per year. Let’s say instead that you decide to do the crawling yourself, and it costs you $55,000 in salary to hire a crawler engineer. A CEO might be tempted to go with the $55k option because an engineer’s salary typically sits in OpEx rather than COGS, which makes her margins look better. But the reality is that the $50k purchase is a much better deal because it is a fixed cost and doesn’t require managing an employee. (And as you scale, that $50k cost might not even grow.)

Of course, this depends on the model for sourcing the data. BD deals are really costly, but co-op models can be very low cost (in terms of cash) once they get to scale.
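For the do-it-yourself option, the core check that the fictional Priviconix would run can be sketched with the standard library. This is only an illustration: it looks for a link mentioning "privacy" in already-downloaded HTML, and a real crawler would also need fetching, retries, JavaScript rendering, and far better heuristics. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class PrivacyLinkFinder(HTMLParser):
    """Flags pages with a link whose href or anchor text mentions 'privacy'."""

    def __init__(self):
        super().__init__()
        self.found = False
        self._in_link = False

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._in_link = True
            href = dict(attrs).get("href") or ""
            if "privacy" in href.lower():
                self.found = True

    def handle_endtag(self, tag):
        if tag == "a":
            self._in_link = False

    def handle_data(self, data):
        # Only count the word when it appears inside a link's text.
        if self._in_link and "privacy" in data.lower():
            self.found = True


def has_privacy_policy_link(home_page_html: str) -> bool:
    finder = PrivacyLinkFinder()
    finder.feed(home_page_html)
    finder.close()
    return finder.found


with_policy = has_privacy_policy_link('<a href="/legal">Privacy Policy</a>')
without_policy = has_privacy_policy_link('<a href="/about">About</a><p>We value privacy.</p>')
```

The check itself is small. The ongoing cost is in running it reliably across 100,000 sites, which is part of why buying the crawl can be the better deal.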

Getting to dominant market share (and leveraging acquisitions)

Once you have a flywheel going for a data company, you need to get to market share dominance in your niche. One way to get to 50% market share is to go after a very small niche and relentlessly focus on it. Then expand to the next niche.

Another way to dominate market share is via aggressive pricing. In the world of SaaS, this is usually a bad idea. But in the world of DaaS, once you have already paid for the data acquisition, your marginal cost of selling to one more customer is zero. So you can afford to be very aggressive on price to capture the market.

Once you have traction, a third lever to get to market share dominance is via acquisitions. SaaS companies often acquire to get new products or new customers. But DaaS companies have an additional opportunity to acquire direct competitors. These DaaS acquisitions can be extremely accretive because you can combine the data sets (making the data better) AND you can eliminate duplicate data acquisition costs.

The goal of getting to market share dominance is not to increase prices on your customers. On the contrary. The goal is to lower your CACs so that you can LOWER prices for your customers. By lowering prices, you make the data more accessible, which leads to more data buyers, which leads to more revenue to spend on data improvement, which creates a monthly compounding benefit for customers — meaning the dollar per data element drops by a minimum of 5% every month. Compounding is really key for data companies.
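To see how quickly a 5% monthly drop compounds, here is the arithmetic as a short sketch. The starting price of 1.0 is an arbitrary unit.

```python
def price_after(months: int, start_price: float = 1.0, monthly_drop: float = 0.05) -> float:
    """Price per data element after compounding a fixed monthly percentage drop."""
    return start_price * (1 - monthly_drop) ** months


after_one_year = price_after(12)   # about 0.54 of the starting price
after_two_years = price_after(24)  # about 0.29 of the starting price
```

At that rate the price per data element falls by roughly 46% in the first year and by about 71% over two years.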

Data companies build an asset that becomes more and more important over time. But it is really hard for a DaaS company to get started. It is very unglamorous work initially. It takes a long time to build the flywheel.

Commoditizing Your Complement

Like all businesses, data companies want to understand their complements and their substitutes. The substitute for a data company is usually another data company. But the complement for a data company is the software or analytics that use the data. In fact, if you are in the data-selling business, you can easily qualify your customers by finding out what software they use. If they use Snowflake, they are much more likely to be a data buyer than if they don’t.

Another thing to think about is how to commoditize your complement for data. There might be core data that everyone needs that you should consider giving away for free to attract customers. For instance, SafeGraph has a free download of Census Block Group data. To learn more about the commoditize-your-complement strategy check out the detailed posts by Joel Spolsky and Gwern.

Vertical versus Horizontal, number of data buyers, and growth of the DaaS market

Generally, most great SaaS companies sell into a specific industry. DaaS, on the other hand, tends to be more horizontal. Compute, too, is horizontal. So are many API services.

This is because data is just a piece of the solution. It is just a component. It is an ingredient — like flour. Flour is used by bakeries, pizza parlors, home cooks, and many others.

SaaS (software) is the solution. SaaS companies solve problems. So they usually need to get down in the weeds of a specific industry to be successful.

Many DaaS companies sell their data not to the end user but directly to software companies. Most end users don’t want data, they want the answer. So software companies buy data, add their magic, and sell the solution to the end user. One interesting thing about the data market is that it historically has been a really bad market to sell into. But that is changing fast.

Example: hedge funds

Just five years ago, only about 20 of the 11,000 hedge funds were making use of large amounts of alternative data. Today, that number is over 500. Because the hedge fund industry is such a competitive and consolidating industry, incremental data can provide a huge advantage. Hedge funds always knew the power of alternative data. Today, that industry finds itself in a more mature place than other industries when it comes to data usage.

And this is not just a trend in hedge funds. The growth in data consumption looks the same in every industry.

Partially this growth is because people are recognizing the power of data. But most of it is due to the fact that the tools to use data are finally becoming available. SafeGraph’s customers benefit from Snowflake, Alteryx, ElasticSearch, and many other super powerful tools. New ML tools that make finding insights from large datasets easier are also contributing to the growth of the data market.

It used to be that only companies with the very best back-end engineers could glean insights from large data sets. Now, a semi-technical business analyst can do it.

Operational cadence of a Data as a Service (DaaS) company

Running a good data evaluation process

Almost every potential customer of every data company will want to evaluate the data before making a purchase. This evaluation process can be a huge drag on a data company’s sales cycle. One way to accelerate data buying and evaluation is having either a freemium model or some sort of self-serve tool. SafeGraph has a simple self-serve offering (feel free to use the coupon code “SpringIntoSafeGraph” for $100 of free data). Once companies already use some data, they are pre-qualified (like a PQL, or Product Qualified Lead) to buy more data.

Upsells are important long-term

If you are a data company and your customers are benefiting from your services (and they have assessed your data), the best way to grow is to sell them more data. You can do this by adding more rows (more coverage), more columns (more attributes), or more history.

The really important thing is that you maintain quality as you add SKUs. This is hard to do, so it is better to have a few very high-quality SKUs than many low-quality ones.

Data agreements and how data is actually sold

Data can be sold along many dimensions: by volume, usage rights, SLA, and more. One thing that all data agreements have in them is specific rights for the buyer. These rights outline what the buyer can and cannot do with the data. (For instance, can they resell the data? Can they share it with their partners?)

Fraud, watermarking, and more

One of the problems with “data” is that it is easily copied. For centuries, mapmakers had to contend with other mapmakers stealing their maps. Today many data companies add watermarks to their data. Essentially they will mix in tiny bits of fake data into the real data so they can track it. Super sophisticated data companies will have different watermarks for each customer — so they can track exactly which customer leaked the data.
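Per-customer watermarking can be sketched as follows. This is a simplified illustration and not a description of how any particular data company does it: the secret key, the customer IDs, and the `place_` record format are all hypothetical, and real watermark rows would need to look like plausible records rather than hashes.

```python
import hashlib
import hmac

SECRET_KEY = b"replace-with-a-real-secret"  # hypothetical; keep it out of source control
CUSTOMERS = ["customer_a", "customer_b", "customer_c"]  # hypothetical customer IDs


def watermark_rows(customer_id: str, count: int = 3) -> list:
    """Derive a few fake record IDs that are unique to one customer."""
    rows = []
    for i in range(count):
        message = f"{customer_id}:{i}".encode()
        digest = hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()
        rows.append(f"place_{digest[:12]}")
    return rows


def export_for(customer_id: str, real_rows: list) -> list:
    """Mix the customer's watermark rows into the real data before delivery."""
    return sorted(real_rows + watermark_rows(customer_id))


def find_leaker(leaked_rows: list):
    """Return the customer whose watermark appears in a leaked file, if any."""
    leaked = set(leaked_rows)
    for customer_id in CUSTOMERS:
        if leaked & set(watermark_rows(customer_id)):
            return customer_id
    return None


real_data = ["place_real_1", "place_real_2"]
leaker = find_leaker(export_for("customer_b", real_data))  # "customer_b"
```

Because the fake rows are derived from a secret key and the customer ID, the seller can regenerate them at any time and does not need to store a separate list of watermarks per delivery.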

The per-seat model for using data

Many data companies do not actually sell data downloads (“data by the kilogram”) but instead sell SaaS applications on top of the data. This is usually a per-seat model. Getting data into a workflow can be really powerful. Alex MacCaw, CEO of Clearbit, often reminds me that “the data isn’t useful unless it’s in the place it needs to be. Thus building great integrations and being part of the user’s workflow is key.”

Your business model and approach will vary greatly depending on your dataset, partners, vertical, and users.

Software versus data

Right now, most companies spend way more on software than they do on data. They also usually have more software engineers than data scientists. As Alexander Rosen, GP at Ridge Ventures, put it: “Will this be different in twenty years? I think it will.”

It is hard for data companies to get started because they are just data and not the full solution. But as the market matures, more and more companies will be able to consume data directly.

The good news is that as software (like Snowflake, etc.) gets more powerful, evaluating the data (data delivery) becomes easier. And as more people become data-literate, more people will want to buy the ingredients (data) rather than the pre-made meal (software).

Data Companies are the unsexy archivists

Working at a data company is like being an archivist at the Library of Congress. You know your job is important but it is often thankless. You have to be meticulous. You have to care about the details. Your mission is to help and support innovators.

There are very few monuments to archivists. They don’t win Nobel Prizes. They don’t write the Constitution. But without archivists, our history would be lost. And without data companies, our future will be less certain. Some people are naturally excited about the role of being an archivist. They are excited to be in the background supporting the heroes. (note: if you are excited about the mission to be an archivist, join us in a career at SafeGraph).

Appendix: Data Themes

“People” is a very common theme

People: data around a person. Data can be tied together with an email address, social security number, or some other key. This is one of the most common themes in the data business. It is also one of the most regulated.

Privacy of People Data

One of the problems with having a “People” theme is the great responsibility of protecting people’s privacy. As you get more data on people, you also open yourself to attacks from the outside (because data about people is valuable to hackers). From a competitive standpoint, one of the good things about data on people is that it is hard to access: it is either not widely available or it is regulated. This creates a barrier to entry for new competitors.

Truth is hard to assess

Of course, a HUGE problem with data about people is that it is very difficult for a customer to check if it is true. The prevailing winds in the people data business have been moving in a direction that may make it harder for new entrants to compete.

“Products” theme

Another great theme is one on products (or SKUs). You can aim to cover all products (like the barcode) or a subset of products. Most of your electronics (like your smartphone, laptop, TV, etc.) carry a serial number that uniquely identifies that device. One example is R.L. Polk (now part of IHS Markit) which has traditionally collected data about cars using the Vehicle Identification Number (VIN).

Products are really important and they can be really niche. For instance, you can build a great winner-takes-most data business just on data about wine.

“Companies” has been a good business

Another good theme historically has been selling data about companies (or organizations). Dun & Bradstreet runs the DUNS number to uniquely identify a company. DUNS is used by many organizations (including the U.S. government, Apple, and others) as a global standard for business identity. Not only do many governments and organizations use the DUNS as a standard, but it is also often required for many business transactions. Another example of data tied to a company is the stock ticker (and all the financial data that joins to it).

“Places” is how you think about the physical world

One of the oldest forms of data is information about a place. Maps have been with us for millennia. Since maps of countries and cities don’t change that much, they are relatively static data sets. SafeGraph (where I work) focuses on information about Places. As of this writing (June 2019), SafeGraph has data on over 5 million places in the US. SafeGraph publishes its full schema — as you can see, everything is connected to a place (via the SafeGraph Place ID).

There are many other super successful place businesses. One amazing places business is CoStar (their market cap is over $20 billion as of this writing). They have detailed information on commercial real estate rentals (like price per square foot, occupancy, and more). CoreLogic sells data about residential properties (like last transaction price of a home, number of bedrooms, and more).

“Procedures” is a bit different: it is instructions on how things are done

A “procedure” is data about a particular action. These are most common in the medical field. For instance, there is a specific code for every medical procedure (CPT codes). Procedures tend to be more complex data elements than people, places, companies, or products because they represent a series of events over time.